Your AI is smart. But smart isn't enough when real money, real patients, or real machines are on the line.

"AI agents are slow and unreliable"

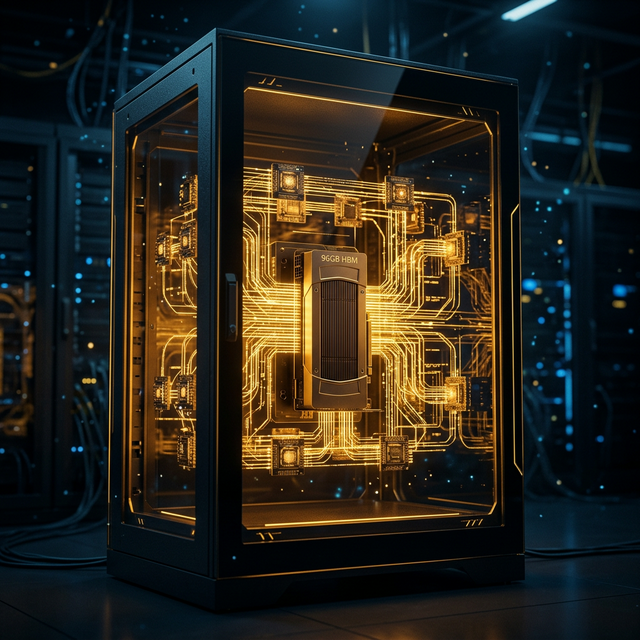

Sub‑Millisecond GPU Decisions

SignalBrain‑OS runs everything on local GPU silicon. Decisions happen in microseconds, not seconds of cloud API ping‑pong.

"We can't trust what our agents do"

Deterministic & Replayable

Same input → same fingerprint → same decision. Every choice is replayable with tamper‑evident audit logs built into the kernel.
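To make the replay idea concrete, here is a minimal sketch of a fingerprinted decision with a hash-chained audit log. It is an illustration only, not the SignalBrain‑OS kernel: `fingerprint`, `decide`, and `AuditLog` are hypothetical names, and the decision rule is a stand-in for any pure function of its input.

```python
import hashlib
import json


def fingerprint(payload: dict) -> str:
    """Stable SHA-256 fingerprint of a JSON-serializable input."""
    # Sorted keys and fixed separators make the encoding independent of key order.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def decide(payload: dict) -> str:
    """Hypothetical decision rule: pure, with no clock, randomness, or I/O."""
    return "approve" if payload.get("risk", 1.0) < 0.5 else "reject"


class AuditLog:
    """Append-only log; each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list = []

    def append(self, payload: dict) -> dict:
        prev = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        body = {
            "input_fp": fingerprint(payload),
            "decision": decide(payload),
            "prev_hash": prev,
        }
        entry = {**body, "entry_hash": fingerprint(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("input_fp", "decision", "prev_hash")}
            if entry["prev_hash"] != prev or entry["entry_hash"] != fingerprint(body):
                return False
            prev = entry["entry_hash"]
        return True
```

Replaying a logged input through `decide` and comparing against the stored entry checks the decision. Calling `verify()` checks that nothing in the log was altered after the fact.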

"Governance is an afterthought"

Policy Compiled Into the Kernel

Apex17 turns company policies into a restricted Action DSL enforced at the kernel level — before any real‑world action executes. Not a dashboard toggle.

"Locked into one vendor’s models"

LLM‑Agnostic. Your Models, Your Choice.

Run local models (Llama, Mistral) on your GPU—or connect cloud APIs (GPT‑5, Claude, Gemini). SignalBrain‑OS is the runtime, not the model. Swap LLMs without changing a line of policy code.
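One way to picture "swap LLMs without changing a line of policy code" is a narrow interface between the model and the runtime. The sketch below assumes a hypothetical `LLMBackend` protocol with stubbed backends. It calls no real vendor API and is not the product's actual interface.

```python
from typing import Callable, Optional, Protocol


class LLMBackend(Protocol):
    """Hypothetical interface: anything that turns a prompt into a proposal."""

    def propose(self, prompt: str) -> str: ...


class LocalModel:
    """Stand-in for a model served from the local GPU (stubbed)."""

    def propose(self, prompt: str) -> str:
        return "HOLD"


class CloudModel:
    """Stand-in for a hosted model behind an API (stubbed)."""

    def propose(self, prompt: str) -> str:
        return "SELL 500"


def allow_trading_verbs(proposal: str) -> bool:
    """Hypothetical policy, written once and independent of the backend."""
    parts = proposal.split()
    return bool(parts) and parts[0] in {"HOLD", "BUY", "SELL"}


def run_step(
    backend: LLMBackend, policy: Callable[[str], bool], prompt: str
) -> Optional[str]:
    """Ask whichever backend is plugged in, then apply the same policy."""
    proposal = backend.propose(prompt)
    return proposal if policy(proposal) else None
```

Switching from `LocalModel()` to `CloudModel()` changes only the first argument to `run_step`. The policy function is untouched.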

"Different domains need different stacks"

One Kernel. Every Domain.

Same H₀ topological backbone, extended with domain‑specific adapters. Markets, healthcare, robotics, defense, cybersecurity: one kernel runs them all.

"Tokens are expensive at scale"

Zero Per‑Token Cost. Run on Your Own GPU.

Cloud API tokens add up fast: $10K/mo, $100K/mo, more. SignalBrain‑OS runs inference locally on your GPU. Once the hardware is paid for, no decision carries a per‑token fee. No metered API. No surprise bills.

"Our AI hallucinated and executed it"

Hallucination‑Proof Execution.

LLMs hallucinate. That's a fact. But in SignalBrain‑OS, no hallucination can become an action. Apex17 compiles every AI intent into a restricted Action DSL—if it doesn't pass the policy gate, it doesn't execute. Period.
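The gate described here can be sketched as a compile step that either yields a typed action or refuses. This is a simplified illustration, not Apex17 itself: the `Action` type, the `POLICY` table, and its limits are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A typed action: the only thing the executor will accept."""

    verb: str
    target: str
    amount: float


# Hypothetical policy: the allowed verbs and a per-verb ceiling on amount.
POLICY = {"transfer": 1_000.0, "refund": 200.0}


class PolicyViolation(Exception):
    """Raised when a model intent cannot be compiled into an allowed Action."""


def compile_intent(intent: dict) -> Action:
    """Turn a free-form model intent into a typed Action, or raise."""
    verb = intent.get("verb")
    if verb not in POLICY:
        raise PolicyViolation(f"verb not allowed: {verb!r}")
    target = intent.get("target")
    if not isinstance(target, str) or not target:
        raise PolicyViolation("missing target")
    amount = intent.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        raise PolicyViolation("amount must be a number")
    if not 0 < amount <= POLICY[verb]:
        raise PolicyViolation(f"amount {amount} outside limit for {verb}")
    return Action(verb, target, float(amount))


def execute(intent: dict, effects: list) -> bool:
    """Side effects happen only after compilation succeeds."""
    try:
        action = compile_intent(intent)
    except PolicyViolation:
        return False
    effects.append(action)  # stand-in for the real-world action
    return True
```

The design point is ordering: the executor only ever sees an `Action`, so an intent that names an unknown verb or an out-of-range amount has no path to a side effect.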

"Our data is raw and unstructured"

Universal Signal Intent (USI) Format.

SignalBrain‑OS doesn't process raw text. It converts all data into a structured signal format (USI) that the kernel can reason over deterministically. Market ticks, LiDAR points, CT scans, NetFlow records: one encoding, one pipeline.
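As a rough picture of "one encoding, one pipeline", the sketch below wraps two very different inputs in the same envelope and orders them deterministically. The actual USI schema is not given here, so every field name (`domain`, `kind`, `timestamp_ns`, `values`) and every helper is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Signal:
    """Hypothetical envelope; the real USI fields are not specified above."""

    domain: str
    kind: str
    timestamp_ns: int
    values: Tuple[float, ...]


def from_market_tick(symbol: str, price: float, size: float, ts_ns: int) -> Signal:
    """Encode a market tick as a Signal."""
    return Signal("markets", f"tick:{symbol}", ts_ns, (float(price), float(size)))


def from_lidar_point(
    x: float, y: float, z: float, intensity: float, ts_ns: int
) -> Signal:
    """Encode a single LiDAR return as a Signal."""
    return Signal(
        "robotics", "lidar_point", ts_ns,
        (float(x), float(y), float(z), float(intensity)),
    )


def ordered(signals: Iterable[Signal]) -> List[Signal]:
    """Total order over signals, so arrival order cannot change the result."""
    return sorted(
        signals, key=lambda s: (s.timestamp_ns, s.domain, s.kind, s.values)
    )
```

Because both sources end up as the same immutable type, downstream stages such as `ordered` need no domain-specific branches.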